Favorites

FINN has a feature where users can mark classified ads as favorites, making it easy to come back to certain ads later. The feature has been around for quite a while, and the systems involved, both frontend and backend, were becoming quite hard to maintain. So the AdView team set out to redesign the whole stack. This blog post will focus on the backend API, which is written in Haskell.

Haskell

Haskell is a purely functional programming language with a powerful type system. The ability to express intent using types helps with correctness, and composing a large program from small, independent building blocks makes the code easy to reason about.
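To make "expressing intent using types" a bit more concrete, here is a minimal sketch. It is not taken from our codebase: the names `UserId`, `AdId`, `Favorites`, `markFavorite` and `isFavorite` are made up for illustration. The point is that a user ID and an ad ID are both integers underneath, but wrapping them in distinct types lets the compiler reject code that mixes them up.

```haskell
module Main (main) where

import qualified Data.Set as Set

-- Hypothetical wrapper types: both are Ints at runtime,
-- but the compiler treats them as unrelated types.
newtype UserId = UserId Int deriving (Eq, Ord, Show)
newtype AdId   = AdId Int   deriving (Eq, Ord, Show)

-- A toy in-memory store of (user, ad) pairs, for illustration only.
newtype Favorites = Favorites (Set.Set (UserId, AdId))

emptyFavorites :: Favorites
emptyFavorites = Favorites Set.empty

-- Mark an ad as a favorite for a user.
markFavorite :: UserId -> AdId -> Favorites -> Favorites
markFavorite user ad (Favorites s) = Favorites (Set.insert (user, ad) s)

-- Check whether a user has marked an ad as a favorite.
isFavorite :: UserId -> AdId -> Favorites -> Bool
isFavorite user ad (Favorites s) = Set.member (user, ad) s

main :: IO ()
main = do
  let user = UserId 7
      ad   = AdId 1234
      favs = markFavorite user ad emptyFavorites
  print (isFavorite user ad favs)         -- prints True
  print (isFavorite user (AdId 99) favs)  -- prints False
  -- The next line would not compile, because the arguments are swapped:
  -- print (isFavorite ad user favs)
```

Each function is small and pure, so it can be understood and tested on its own and then composed with the others.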

A large ecosystem of production-grade libraries is available from Hackage. We make use of Servant (API), Aeson (JSON), Hasql (PostgreSQL) and many more. Servant describes an API at the type level, meaning the “sum” of all our endpoints becomes a distinct type. This, in turn, means that we get heavy compiler assistance when refactoring our API. More than once during development we needed to make major changes to the endpoints (verbs, paths, request bodies, responses) and found that once every compilation error was resolved, the new API was working correctly.
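For readers who have not seen Servant, the sketch below shows roughly what an API-as-a-type looks like. It is not our actual API: the endpoints, the `FavoritesAPI` name, the in-memory `IORef` store (standing in for PostgreSQL) and port 8080 are all assumptions made for the example, and plain `Int` IDs are used for brevity. It assumes the `servant-server`, `warp` and `containers` packages are available.

```haskell
{-# LANGUAGE DataKinds #-}
{-# LANGUAGE TypeOperators #-}

module Main (main) where

import Control.Monad.IO.Class (liftIO)
import Data.IORef (IORef, atomicModifyIORef', newIORef, readIORef)
import qualified Data.Map.Strict as Map
import qualified Data.Set as Set
import Network.Wai.Handler.Warp (run)
import Servant

-- Hypothetical endpoints: the whole API is a single type.
type FavoritesAPI =
       "favorites" :> Capture "userId" Int :> Get '[JSON] [Int]
  :<|> "favorites" :> Capture "userId" Int :> Capture "adId" Int :> Put '[JSON] [Int]
  :<|> "favorites" :> Capture "userId" Int :> Capture "adId" Int :> Delete '[JSON] [Int]

-- Toy in-memory store: user ID -> set of favorite ad IDs.
type Store = Map.Map Int (Set.Set Int)

favoritesOf :: Int -> Store -> [Int]
favoritesOf uid = maybe [] Set.toAscList . Map.lookup uid

addFav, removeFav :: Int -> Int -> Store -> Store
addFav uid aid    = Map.insertWith Set.union uid (Set.singleton aid)
removeFav uid aid = Map.adjust (Set.delete aid) uid

-- The handlers must line up with FavoritesAPI, in order and in type.
server :: IORef Store -> Server FavoritesAPI
server ref = list :<|> add :<|> remove
  where
    list :: Int -> Handler [Int]
    list uid = liftIO (favoritesOf uid <$> readIORef ref)

    add, remove :: Int -> Int -> Handler [Int]
    add uid aid    = update (addFav uid aid) uid
    remove uid aid = update (removeFav uid aid) uid

    -- Apply a change atomically and return the user's updated favorites.
    update :: (Store -> Store) -> Int -> Handler [Int]
    update f uid = liftIO $ atomicModifyIORef' ref $ \s ->
      let s' = f s in (s', favoritesOf uid s')

main :: IO ()
main = do
  ref <- newIORef Map.empty
  run 8080 (serve (Proxy :: Proxy FavoritesAPI) (server ref))
```

If you change a verb, a path capture or a response type in `FavoritesAPI`, the `server` definition stops compiling until the handlers match again. That is the kind of compiler assistance described above.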

To manage external packages and to build and test the code, we use Stack, because it feels familiar to Maven, Gradle and sbt users. `stack build` compiles the project, and `stack test` runs all the tests. Stack pulls down the Glasgow Haskell Compiler (“GHC”) along with the required libraries. It also supports building and running the final program in Docker.

Docker is important because of the cloud infrastructure at FINN: any technology that runs in Docker can be deployed as a new microservice.

Experiences

So what are the downsides to using Haskell? Well, there is really only one: a certain number of developers, at least in our team, have to know Haskell. We meet this challenge in three ways. Firstly, we are running a Haskell course internally at FINN: 21 of our developers have signed up for an introductory Haskell course from the University of Glasgow. Secondly, we cheated a little by simply recruiting another Haskell developer. And lastly, we will put some effort into the Oslo Haskell Meetup group, hopefully spawning even more Haskellers.

Really? No more downsides? Well, it should be mentioned that our build times on Travis CI (self-hosted) are not super-awesome-great. We are currently looking at 8-9 minutes for a complete build (including integration tests). The Stack build tool uses Docker for every task, and sometimes needs to pull a new version of an image. This hurts build times, but we can live with it because it gives us benefits in terms of isolation.

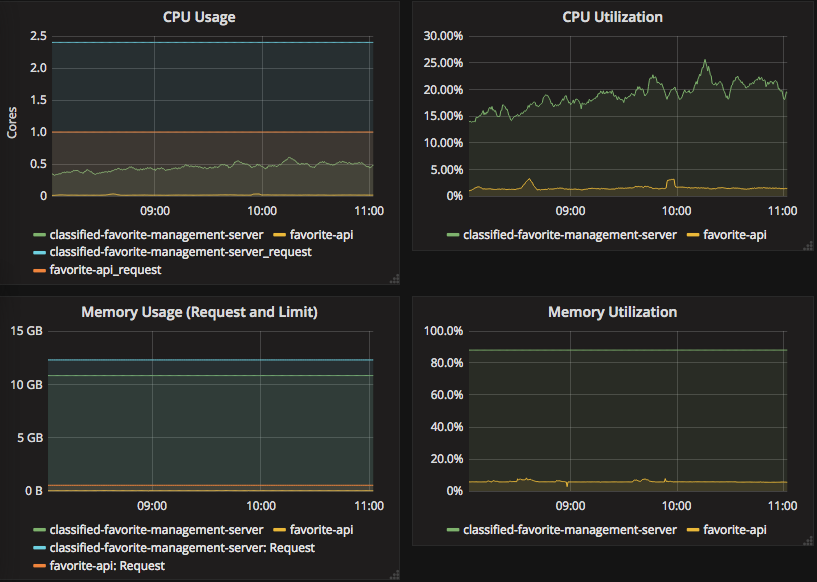

As for performance, the Warp web server is doing an excellent job of spawning lightweight threads and keeping CPU and memory usage low. An indication of memory and CPU usage is given below. Note that the old API still has far more traffic, so a direct comparison is very unfair!

classified-favorite-management-server is the old API (Java, hence the long name).

You can barely see the 32 MB memory footprint of the new API in the graphs!

Check it out

We are really looking forward to putting more load on our new API, and to doing more Haskell in the future. You can check out the redesign of favorites for yourself. And if you would like to learn some Haskell, a great starting point is Learn You a Haskell for Great Good!